The NCMEC recently published its statistics and figures for 2023, and something that caught my eye was this blurb:

In 2023, NCMEC engaged Concentrix, a customer experience solutions and technology company, to conduct an independent audit of the hashes on our list. They were tasked with verifying that all the unique hashes corresponded to images and videos that were CSAM and met the U.S. federal legal definition of child pornography. The audit, the first of its kind for any hash list, found that 99.99% of the images and videos reviewed were verified as containing CSAM.

Learn more about the audit process and their findings here.

I checked out the PDF, and the key findings stood out.

KEY FINDINGS

David J. Slavinsky, Concentrix’ primary site director during the audit made the following conclusions upon completion of the Concentrix audit:

- Concentrix moderators completed two independent reviews of each of the 538,922 images and videos on NCMEC’s CSAM Hash List.

- Concentrix’s audit concluded that 99.99% of the images and videos met the federal definition of Child Pornography under 18 U.S.C. § 2256(8).

- Concentrix moderators identified 59 exceptions during their audit as follows:

a. 50 images and/or videos were deemed not to meet the federal definition of Child Pornography.

b. 5 images and/or videos were classified as a cartoon/anime depicting sexually exploitative content involving a child, but not meeting the federal definition of Child Pornography.

c. 2 videos were damaged and could not be played.

d. 2 images and/or videos contained sexual content, but the Concentrix moderators could not distinguish whether a child or an adult was depicted in the imagery.
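As a quick sanity check on those figures, here is a minimal sketch of the arithmetic. The dictionary keys are my own labels, and it assumes the 99.99% headline figure was derived by subtracting all 59 exceptions from the total reviewed, which the report does not spell out.

```python
# Figures quoted from the audit's key findings above.
total_reviewed = 538_922

# Exception counts (a) through (d); the key names are illustrative labels only.
exceptions = {
    "not_child_pornography": 50,
    "cartoon_or_anime": 5,
    "damaged_video": 2,
    "age_indeterminate": 2,
}

total_exceptions = sum(exceptions.values())

# Assumption: "verified" means everything that was not listed as an exception.
verified_pct = 100 * (total_reviewed - total_exceptions) / total_reviewed
cartoon_pct = 100 * exceptions["cartoon_or_anime"] / total_reviewed

print(f"exceptions: {total_exceptions}")
print(f"verified:   {verified_pct:.2f}%")
print(f"cartoon:    {cartoon_pct:.5f}%")
```

Under that assumption, the four categories do sum to the 59 exceptions reported, and the verified share rounds to the 99.99% quoted.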

In other words, of the 538,922 images and videos whose hashes are on the list, only 5 were cartoons/anime, and the auditors flagged those as exceptions. The audit was looking specifically for material that falls under 18 U.S.C. § 2256(8), a definition that cannot be read to include cartoons/anime:

(8) “child pornography” means any visual depiction, including any photograph, film, video, picture, or computer or computer-generated image or picture, whether made or produced by electronic, mechanical, or other means, of sexually explicit conduct, where—

(A) the production of such visual depiction involves the use of a minor engaging in sexually explicit conduct;

(B) such visual depiction is a digital image, computer image, or computer-generated image that is, or is indistinguishable from, that of a minor engaging in sexually explicit conduct; or

(C) such visual depiction has been created, adapted, or modified to appear that an identifiable minor is engaging in sexually explicit conduct.

. . .

(11) the term “indistinguishable” used with respect to a depiction, means virtually indistinguishable, in that the depiction is such that an ordinary person viewing the depiction would conclude that the depiction is of an actual minor engaged in sexually explicit conduct. This definition does not apply to depictions that are drawings, cartoons, sculptures, or paintings depicting minors or adults.

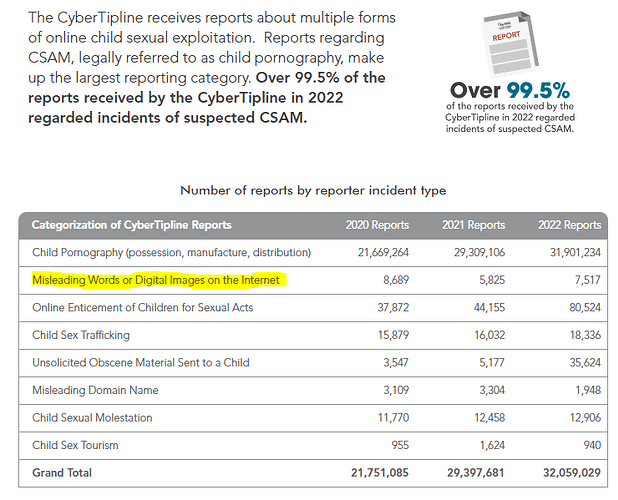

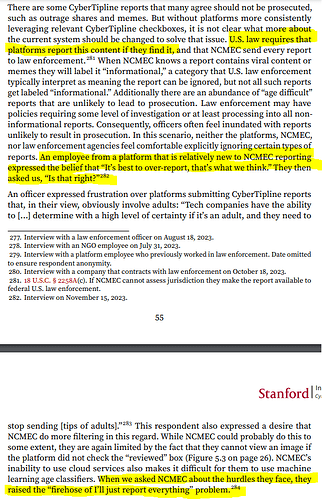

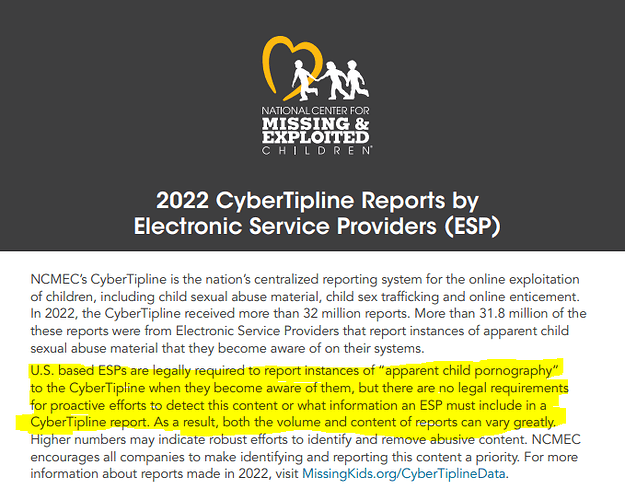

This also aligns with a December 2023 Stanford study that searched the datasets used to train popular AI models for CSAM.

Comprehensiveness of PhotoDNA: PhotoDNA is naturally limited to only known instances of CSAM, and has certain areas upon which it performs poorly. For example, based on keywords, significant amounts of illustrated cartoons depicting CSAM appear to be present in the dataset, but none of these resulted in PhotoDNA matches.

I think it’s interesting that the NCMEC brought in an outside firm to essentially perform the same task that its own analysts ought to have done. I suppose the external credibility is helpful, but I still would have preferred assurance that analysts were doing their jobs and ensuring that only content made from known victims gets in, not material that is either ambiguous or deliberately falls outside the scope and definition of 18 U.S.C. § 2256.